AMSTAT Consulting: 19 Years of National Recognition in Dissertation Consulting

OUR DISSERTATION CONSULTING TEAM’S EXPERTISE

Our clients cite the following reasons for choosing our dissertation consulting team:

- We have PhDs from leading universities, such as Harvard, Stanford, and Columbia.

- Our consultants have extensive backgrounds in statistics and over 100 years of combined practical experience in quantitative and qualitative methods.

- Our editors have over 100 years of combined practical experience in editing.

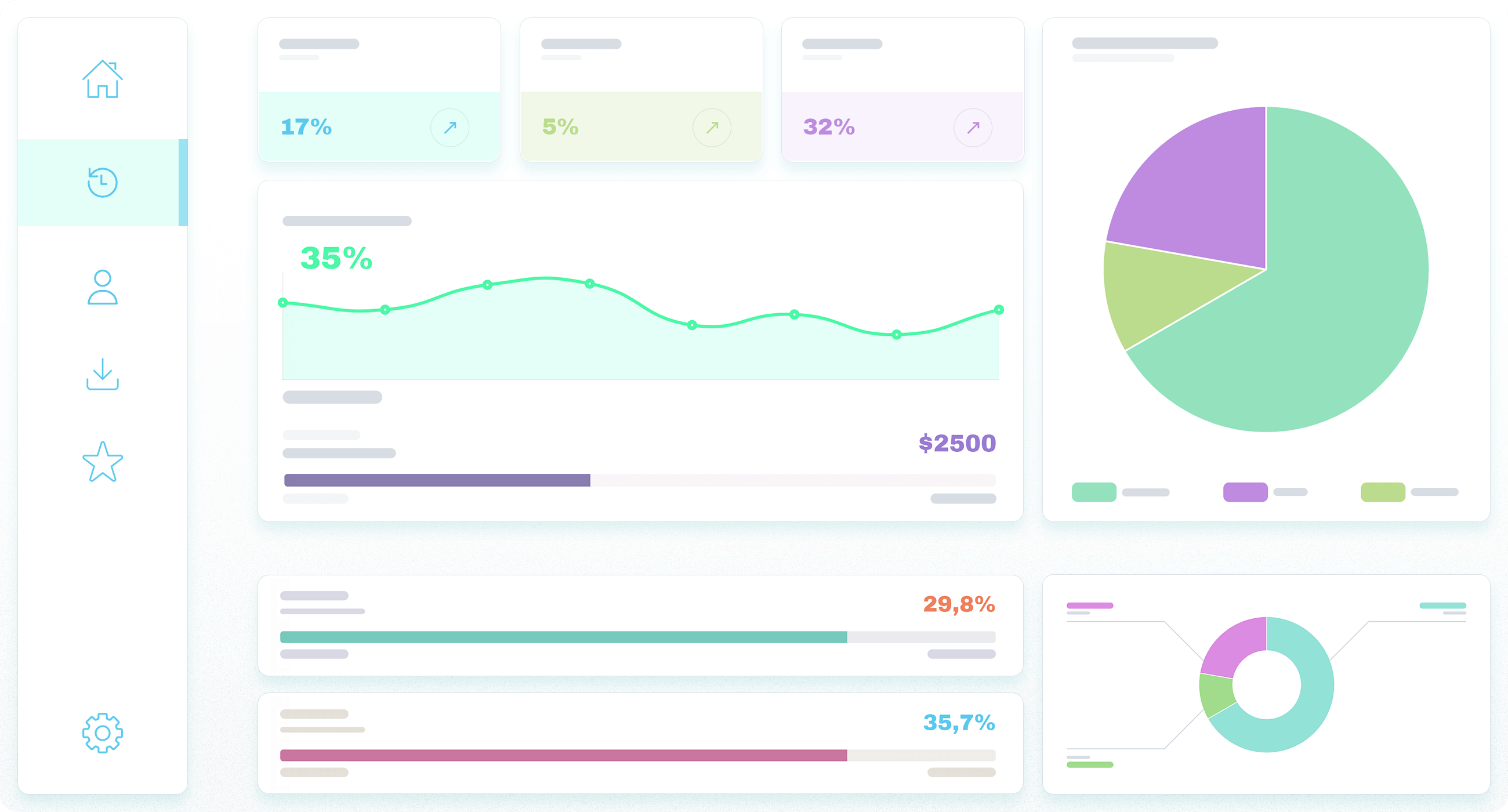

- Our statisticians are experts in statistical analysis (e.g., t-test, ANOVA, ANCOVA, traditional regression, logistic regression, MANOVA, MANCOVA, factor analysis, cluster analysis, survival analysis, time series analysis, correlation analysis, meta-analysis, and more advanced statistical techniques such as structural equation modeling (SEM), hierarchical linear modeling (HLM), and path analysis).

- Our analysts use various statistical software tools, including SPSS, SAS, Stata, HLM, Mplus, R, SPSS Amos, SPSS Modeler, Azure, JMP, Python, WinBUGS, Minitab, SYSTAT, LISREL, EQS, SmartPLS, WarpPLS, EViews, and many others.

- Our analysts are experts in qualitative analysis software, including NVivo, NUD*IST, HyperResearch, Atlas.ti, and MAXQDA.

- We have expertise in the requirements of traditional and online universities.

- We are more reasonably priced than most other consultants offering dissertation help.

- We offer ultra-fast turnaround times, often completing sections within 4-5 business days.

- Over 90% of our clients return for further assistance, reflecting near-universal satisfaction with our dissertation consulting brands.

- We offer personalized, comprehensive, and friendly support during and after you consult with us.

- We tailor our help to your project, including assisting you in specifying your methodology.

- The Association for Support of Graduate Students highly recommends us, and we are members in good standing of the Statistical Consulting section of the American Statistical Association.

- We can assist with all five chapters of your dissertation.

- We are committed to quality and excellence, as reflected in our record of five-star reviews.

OUR CUSTOMERS LOVE US!

FREE DISSERTATION CONSULTING

UNLOCK YOUR DISSERTATION’S POTENTIAL – FREE PERSONALIZED FEEDBACK SERVICE AVAILABLE NOW!

Embark on a journey to academic excellence with our bespoke dissertation feedback service. Our seasoned team of academics and researchers provides expert advice on the critical aspects of your dissertation, including the introduction, literature review, methodology, data, results, and discussion.

WHY DO OUR CLIENTS CHOOSE OUR FREE FEEDBACK SERVICE?

Personalized Support: Direct, one-on-one feedback on your dissertation, ensuring every piece of advice is tailored to your project’s unique needs.

Expert Insights: Our experts bring years of academic experience to offer invaluable insights that sharpen your research question, strengthen the feasibility of your methodology, and improve the coherence of your literature review.

Success Stories: Join our community of successful graduates. “The feedback session was transformative for my dissertation!”

DON’T MISS THIS LIMITED OFFER

Act on this exclusive offer and take the first step toward refining your research with expert guidance. Contact us now to secure your spot.

LOOKING BEYOND THE REPORT

Your journey doesn’t have to end with our free feedback report. We offer comprehensive dissertation consulting services to support you from proposal to defense.

TAKE ACTION TODAY

Are you ready to enhance your dissertation? Contact us today and discover how our expert advice can help you achieve your research goals. Through personalized support and insights, we’ll work with you to elevate your dissertation to a standard of excellence.

CONTACT US FOR FREE DISSERTATION CONSULTING

Phone Support: +1 (650) 460-7431

Email: info@amstatisticalconsulting.com

24/7 Chat Support: Immediate assistance via our chat icon in the right corner of our website

Visit Us: 530 Lytton Avenue, 2nd Floor, Palo Alto, CA 94301

Your confidentiality is our priority. Non-disclosure agreements are available upon request.

HOW WE CAN HELP YOU WITH EACH CHAPTER

Don’t let dissertation writing stress you out. Let us help you achieve your academic goals!

Dissertation Consulting

Introduction Chapter

Our dissertation consulting team can help you with any or all of the following steps:

- Identifying and articulating the research problem

- Describing the theoretical construct

- Explaining the nature of the study.

Literature Review Chapter

Our dissertation consultants can help you with any or all of the following steps:

- Effectively searching, selecting, organizing, and synthesizing articles

- Presenting your literature review in a way that tells a story and aligns with your research questions

- Ensuring the gap in the literature is clearly stated and aligns with the problem statement

- Replacing and updating articles as necessary.

Methodology Chapter

Our dissertation consulting team can help you with any or all of the following steps:

- Selecting and discussing the research design

- Providing the steps necessary for a qualitative or quantitative study

- Ensuring the data plan and sample size are accurate

- Choosing the correct analyses.

Results Chapter: Statistical Analysis

Our dissertation consulting team can help you with any or all of the following steps in statistical analysis:

- Getting your dataset ready for analysis

- Inputting, organizing, coding, merging, managing, and cleaning your data

- Creating composite scores and labeling your data

- Dealing with missing data

- Detecting and correcting inaccurate or unexpected records in your database

- Testing reliability (e.g., Cronbach’s alpha, test-retest reliability, split-half reliability, inter-rater reliability)

- Testing validity (e.g., convergent validity, construct validity, discriminant validity)

- Conducting analyses and assessing their assumptions

- Providing you with syntax and raw output files

- Writing up the results, including tables and figures.

Results Chapter: Qualitative Analysis

Our dissertation consultants can help you with any or all of the following steps in qualitative analysis:

- Coding your qualitative data

- Identifying themes and sub-themes

- Providing frequencies and percentages for each theme and sub-theme

- Writing up the results, including tables and figures.

Discussion Chapter

Our dissertation consulting team can help you with any or all of the following steps:

- Interpreting your results

- Discussing the theoretical and practical implications of your findings and how they relate to the existing literature

- Discussing the study’s limitations and recommendations for future research.

Statistical Analysis

We can design and perform the required statistical analyses. Here are some of the analytical techniques with which we are familiar:

- t-test

- ANOVA

- ANCOVA

- Traditional regression

- Logistic regression

- MANOVA

- MANCOVA

- Factor analysis

- Survival analysis

- Time series analysis

- Correlation analysis

- Chi-square test

- Meta-analysis

- Cluster analysis

- Advanced techniques such as Structural Equation Modeling (SEM), Hierarchical Linear Modeling (HLM), and Path Analysis

- Design of experiments

- Response surface methodology

- Bayesian analysis

- Latent class analysis

- Longitudinal growth modeling

- Mixture models

- Linear mixed models

- Distribution analysis

- Predictive analytics

- Ensemble analysis

- De-identification

- Trend analysis

- Sensitivity analysis

- Negative binomial regression

- Interim analysis

- Decision trees

- Item analysis

- Nonparametric tests

- Statistical and decision modeling

- MaxDiff

- Segmentation analysis

- Conjoint/discrete choice analysis

- Propensity score analysis

Software

We have expertise in virtually every statistical and qualitative software package, including:

- SPSS

- SAS

- Stata

- R

- Mplus

- HLM

- Python

- SPSS Amos

- SPSS Modeler

- MATLAB

- JMP

- WinBUGS

- Minitab

- SYSTAT

- LISREL

- EQS

- SmartPLS

- WarpPLS

- EViews

- NVivo 12

- NUD*IST

- HyperResearch

- Atlas.ti.

FIVE-STAR REVIEWS

Zenovia B., 5-star Trustpilot Review

I am very impressed by the professionalism AMSTAT Consulting has provided for my educational research. I have been working with the company for the last few years, and all editing projects were completed promptly with high-quality results. The friendliness and the customer service are excellent. I highly recommend them!

Kulwant K., 5-star Trustpilot Review

They are excellent people. I always got the response in a timely fashion. They never show any frustration. They have the best writers.

Vicki A., 5-star Trustpilot Review

AMSTAT Consulting was recommended to me to edit my dissertation. Their work significantly improved the quality and readability of my writing. While working with them, I found them efficient, and their pleasing way of working gave me great peace of mind. The result was a high-quality dissertation and a distinction for my Ph.D. I recommend AMSTAT Consulting to work its magic on your dissertation.

Rose Z., 5-star Trustpilot Review

This service is one of the best that helped me do my dissertation. AMSTAT Consulting’s consultants provide completed work on time, and quality is what they produce. I recommend this service to all other students looking for writing help. Work with this service if you want a top-quality paper to be presented to you. It is one of the best agencies I have worked with, so you do not have to panic. Try it out and enjoy the results.

Jeremy P., 5-star Trustpilot Review

Excellent job. Highly recommend. I am completely satisfied.

Amy W., 5-star Manta Review

They did a fantastic job on my thesis. The editor made several helpful suggestions. I will use them again.

Ross K., 5-star Manta Review

Your consultants exceeded my expectations, and I am forever thankful for that. My thesis shone above everything I thought it would. All I can say is wow. Wow. Wow. Wow. And thank you.

Amy G., 5-star Manta Review

The service is very professional, and I am pleased about receiving the paper before the due time. I am so glad to pass on AMSTAT Consulting’s services to others I know in the same situation.

Katherine D., 5-star Manta Review

We were delighted with AMSTAT Consulting as an editor, statistician, advisor, and companion. We can highly recommend their work ethic. Their perfectionist approach resolved my fears of writing my dissertation, and I can assure everyone who contemplates working with them: You will be pleased with the final product. It is wonderful to work with consistently reliable people who have a genuine interest and in-depth knowledge of the subject matter and have a way with words. They are very talented.

Shinerena S., 5-star Facebook Review

I would highly recommend AMSTAT Consulting! Amazing customer service! I worked with Ann, who was very knowledgeable! She answered all my questions promptly. The service I requested resulted in a fast turnaround! You won’t be disappointed!

Sophia H., 5-star Facebook Review

Great staff, great service. The main benefit is the level of support and service provided. AMSTAT Consulting has been extremely helpful throughout the process. Thanks, guys!

Ross Z., 5-star BBB Review

I am very impressed with this service because of the seriousness it displays. I got a top-notch paper, and I urge you to keep on with the good spirit.

RECENT QUANTITATIVE PROJECTS

Internet Use

The National Science Foundation funded a project to explore the effect of internet use on perceived performance. With the increasing integration of technology into education and daily life, the client sought to investigate whether the extent of internet use had a discernible effect on how individuals perceived their performance. To comprehensively address this research question, […]

Relative Risk of Lung Cancer

The National Institutes of Health funded a research project to address a critical public health concern: lung cancer risk and smoking-cessation interventions. The study aimed to investigate the relative risk (RR) of lung cancer and how it might vary between the smoking-cessation intervention and control groups over time. The goal was to […]

Mathematics Performance

We were involved in a research project commissioned by the National Science Foundation. The project’s objective was to explore the effect of motivation on students’ mathematics performance. The client, committed to advancing education and understanding the factors that influence academic achievement, wanted to investigate the role of motivation in mathematics performance using data from the […]

RECENT QUALITATIVE PROJECTS

Managers’ Spirituality

The National Science Foundation funded a research project to explore the role of managers’ spirituality in responsible managerial behavior. The client sought to understand the potential role of spirituality in promoting responsible decision-making among managerial staff. To address this issue, we collected diverse data from interviews and observations. Over two years, we gathered data from […]

Prostate Cancer Screening

The National Institutes of Health funded a research project aimed at identifying and understanding the barriers that prevent individuals from accessing prostate cancer screening, recognizing the importance of early detection in combating prostate cancer. To explore this issue comprehensively, we collected interview and observational data from 103 participants over a meticulous two-year study. The […]

Business Success

The National Science Foundation provided critical funding for a research project aimed at unraveling the role of leadership behavior in the success of businesses. The client recognized leadership’s pivotal role in influencing organizations’ performance, growth, and success. We collected a diverse dataset comprising interviews and observations to explore this multifaceted issue comprehensively. Over a meticulous […]